I heard ‘overqualified’ as an excuse, as if it were a contagious disease. Judging by most of the people doing the interviews, I don’t think there was any danger of infection.

You stay in your comfort zone to avoid surprises after a few years of nothing but surprises. You just hope to find vendors who are willing to stay with what they know how to do well and not head off in ‘an exciting new direction’ when a new CEO takes over and decides to make changes.

a) “I don’t have recent experience” (but since I’m one of the most highly qualified engineers in Aus, they have to pay me what I’m worth by industry standards (by law), and that would be over $130k/year + package),

b) “They want someone younger, and with a car” (someone inexperienced enough to be cheap and stupid enough to be the free delivery guy),

c) “You’d have my job in 6 months” (No, 3 months, moron!)

And I agree with the above (re: using what you know). I changed over to this new cloud system because I trust Prometeus; they showed me how they tested it over the past year, and I was quite content with what they had done. They didn’t launch it until they were as certain as they could be that it would work (unlike many companies that would have launched it six months ago and fixed stuff as the customers found the problems). But it’s just a platform. On top of it, I’ll be running all the s/w I know well. And as much as I hated working for HP, their server/network hardware and OS do work (and I know HP-UX intimately anyway, so I may be able to help the guys out if they get a problem).

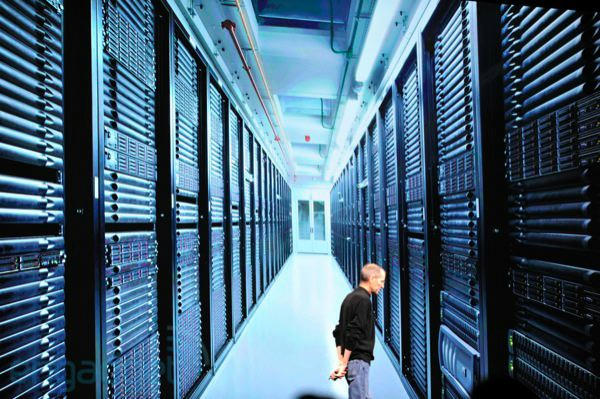

I was glad when Apple finally got out of the enterprise game and killed the Xserve line in 2011. It should have happened much earlier IMHO! It really was crap! (And I have all the Apple server certs, so I speak from experience.) Sure, it had some nice features… but most were poorly implemented! NO hot-swap capability, no redundant PSU, poor power management… etc., etc.! Much of the management s/w that was applauded by the industry was half-assed, like the LOM (Lights Out Management). It was good, except that it was bound to a single IP and the primary Ethernet port! If that port died or the IP changed… SOL! And so on. I had to laugh when I came across a photo of Jobs (from the WWDC 2011 keynote live blog) standing in Apple’s huge new data center… Not a single bit of Apple hardware anywhere! It was all HP! 😆

Steve Jobs in Apple Data Center WWDC 2011

*sigh* Mind you… I wouldn’t mind having all that hardware! 😉 😀

I can vouch for this model. I have 1.5 “S” degrees (both heavily “M”-based) and part of a “T” certificate, and if Dean Baker is right, I’m making less than minimum wage:

I totally agree – better the problems you know and understand than intermittent weirdness that destroys your credibility and your company. If you already know about the problems, you already know about the work-arounds. One day they’ll ‘fix it’ and there will be a fracture somewhere else in the code. For every fix there is a new and seemingly unrelated problem – if that isn’t a ‘law’ yet, it should be.

Regarding drama, one reason I went with generic Linux server storage was that I understand the Linux block subsystem at an intimate level and understand how the various layers of RAID, LVM, iSCSI, and filesystem/NAS protocols work from the inside out. There is no drama with generic Linux storage servers, just annoyance at the idiocies that have crept in over the years, such as having no way to add replication transparently (you have to take down an LVM share, layer a DRBD replication layer on top of it, and then point the filesystem or iSCSI target at the DRBD device rather than the LVM device). I would rather be annoyed than wake up one day to find that the management volume on my iSCSI block store from XYZ Corp got corrupted and all my volumes are gone with no hope of retrieval. Just sayin’.
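To make the layering point concrete, here is a toy Python (3.9+) model of the storage stack, listed bottom to top. It is only an illustration under assumptions: the layer names and device paths (`/dev/md0`, `/dev/vg0/share`, `/dev/drbd0`) are hypothetical, and nothing here touches real devices. It shows why adding DRBD is not transparent: once the replication layer sits above the LVM volume, whatever exports the data has to be repointed at the new top device.

```python
# A toy model of a Linux block-storage stack, listed bottom to top.
# Device paths are hypothetical examples; nothing here touches real devices.
Layer = tuple[str, str]  # (layer kind, device node it exposes)


def export_device(stack: list[Layer]) -> str:
    """The filesystem or iSCSI target must sit on the topmost device."""
    return stack[-1][1]


def add_replication(stack: list[Layer], drbd_device: str) -> list[Layer]:
    """Return a new stack with a DRBD layer inserted directly above the LVM layer.

    Raises ValueError if the stack has no LVM layer.
    """
    kinds = [kind for kind, _ in stack]
    idx = kinds.index("lvm")
    return stack[: idx + 1] + [("drbd", drbd_device)] + stack[idx + 1 :]


if __name__ == "__main__":
    before = [("raid", "/dev/md0"), ("lvm", "/dev/vg0/share")]
    after = add_replication(before, "/dev/drbd0")
    print("export before:", export_device(before))  # /dev/vg0/share
    print("export after: ", export_device(after))   # /dev/drbd0
```

The repointing step is where the downtime comes from: the share has to come offline while the export is switched from the LVM device to the DRBD device.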

The ‘functioning as designed’ line is almost an IBM trademark, going back to the 360 mainframes. When you have devastation throughout your computer center, you really don’t want to hear from the IBM support team that the beast was supposed to do that.

More and more every year, you get the feeling that no one is actually testing anything before it ships. Customers have become the testers. That is the only way to explain why things you purchased, because you were told they were designed for what you want to do, don’t actually do it, or do it so badly that they are a drag on your throughput.

Prometeus are on to something with the open source community, because it is made up of people who are actually interested in what they are doing and willing to try to make it work for you, including testing it before they give it to you. I don’t have a problem donating to several efforts, because I have certainly paid a lot of money to for-profit vendors for no return.

Good luck, guys.

The VPS servers Prometeus use run on Supermicro hardware (Twin² and FatTwin). But the new cloud service had to be able to scale from the low end to the enterprise (they have some enterprise clients that want to migrate from traditional VPS to cloud-based hosting), and it also had to be able to scale quickly over time. Curiously, while they are paying a small fortune for hardware, all the software is open source. The HUS 150 SAN (with 120 TB of SAS HDDs) cost them 120k EUR last year. Plus 4 Brocade 340 SAN switches with all ports licensed + 3-year onsite support with a 4-hour hardware replacement guarantee, etc. It’s replacing an old Sun/StorageTek system. The HUS 150 is considered Hitachi’s top tier-2 mid-range unified SAN.

They said one of the reasons they went with CloudStack was that it’s open source under the Apache umbrella and they’d had very good dealings with the development teams over a period of months. Apparently they didn’t get very far with the OpenStack crowd. Prometeus are developing their own control panel for CloudStack, working with the Apache team. 🙂

If you are interested, Prometeus have some info on their CloudStack implementation on their tech forum:

Prometeus – Tutorials and HowTo’s

I was reading a hot discussion on Ars Technica about tier-2 SANs, with some interesting horror stories! Buyer beware, indeed! 😀

I have significant reservations about the V7000.

I offer the following anecdotes regarding our two units that are less than a year old:

1) During a firmware update, the unit stopped servicing I/O for approximately two minutes. As a result, writes from Windows and Linux guests in our ESX clusters failed, and the guests crashed or were corrupted. IBM identified the cause, and I believe it is fixed in their current firmware, but it was present in their 6.2 and 6.3.01 code.

2) Under heavy write activity, the controller deemed the write cache ‘too busy’ AND DISABLED IT. The disabled write cache again led to conditions where some guests experienced write delays so long that they failed/crashed/corrupted. When this condition occurs, the controller keeps the write cache disabled for at least one hour before considering re-enabling it. IBM identified the cause of this behavior and considers it to be functioning as designed. No fix exists, and none is planned.

3) IBM introduced a bug in the code in mid-2011 that causes a unit that has not been rebooted for 208 days to spontaneously reboot; that could be one or both of the controllers, depending on how lucky you get. That bug has been identified, and I believe it has been corrected in current code.

Allowing that #1 “could happen to anybody”, I would say that #2 and #3 are not the sort of thing one would expect of ‘mature code’ from a ‘world class vendor’.

Our two V7000 units will be leaving our data center just over one year into their five-year contracts. #2 above was a deal breaker: when they told us it was functioning as designed, we concluded that it just wasn’t designed to meet our (relatively modest…) needs.

…

We’re actually in the process of returning a pair of Symantec 5220s that we had on a try-and-buy.

The appliances couldn’t even do a backup to tape. After we’d struggled with deduped replication and been told to hold off for the next appliance software update, we converted our SLPs into simple D2D2T jobs, and we had problems with those too. We took the jobs off the appliance and put them on our Windows-based media server, going straight to tape without changing anything else, and they worked just fine. Symantec support was worthless even with the regional VP of sales breathing down their necks, so we finally said screw it: no need to wait for the next software update. If they can’t do a simple backup job, how are we ever going to trust them to do something as complicated as deduped replication correctly? We’re currently evaluating what to spend the refunded money on, and I’m really not sure. Avamar seems out, based on its 75GB/hour-per-node performance (<21MB/s; really, that’s lower than LTO-2 performance). Data Domain with NetWorker is a possibility, but we’re going to have to do an eval to see how it fits our environment. Finally, I’m seriously considering moving to Commvault on 2U Windows boxes. I know it has its issues with version upgrades, but no product in the backup space is anywhere near perfect.
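The <21MB/s figure comes from converting the quoted 75GB/hour per node into a per-second rate. A quick Python check, assuming decimal units (1 GB = 1000 MB); with binary units the result lands just above 21:

```python
SECONDS_PER_HOUR = 3600


def gb_per_hour_to_mb_per_s(gb_per_hour: float, decimal: bool = True) -> float:
    """Convert a GB/hour throughput figure into MB/s.

    decimal=True uses 1 GB = 1000 MB; decimal=False uses 1 GiB = 1024 MiB.
    """
    mb_per_gb = 1000 if decimal else 1024
    return gb_per_hour * mb_per_gb / SECONDS_PER_HOUR


if __name__ == "__main__":
    print(f"{gb_per_hour_to_mb_per_s(75):.2f} MB/s")                  # 20.83 MB/s
    print(f"{gb_per_hour_to_mb_per_s(75, decimal=False):.2f} MiB/s")  # 21.33 MiB/s
```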

…And so on! 😆

I am sooooo glad I am out of that game!! HP & Apple were definitely the nails in the coffin of that career path for me.

My data servers are generic Supermicro 12-disk server boxes. My compute servers are HP ProLiant servers chosen from our pile of gear because they have very low power usage for a server (they use roughly two-thirds the power of an equivalent Supermicro 2-disk box) and will hold more memory than the equivalent Dell and Supermicro gear. They’re all running 2.4GHz quad-core Westmere Xeon chips (two of ’em in the compute servers, just one in the data servers) and are connected together with 10Gig back-end (data) network cards. The front-end network is only 1Gig, but since most of the work is done on virtual machines that are on the 10Gig fabric, the result is seriously fast. I’m about to get another server box, which is going to have a pair of 2.4GHz quad-core Ivy Bridge Xeons in it. Most decidedly *not* the fastest thing ever seen by man; in fact, almost the slowest Ivy Bridge Xeons you can get, but cheap, stable, and plenty fast for what we do.

The biggest drama I have is when disk drives pop. I got a SMART alert on one yesterday, and it then failed out of the RAID array, so I popped it out, popped a new one in, saw where Linux had inserted it, used mdadm to add it to the array, and there ya go, rebuilding away. Not exactly the stuff of drama. Of course, if I’d done what way too many of our customers do and ignored the SMART alert, it could have escalated into drama, but I’m not that stupid!
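Catching a popped drive early is mostly a matter of watching the array state. As a rough sketch (not the author's setup), the Python below parses the text of Linux software RAID's `/proc/mdstat` and reports arrays running with fewer active members than slots, plus any rebuild progress. The sample text is made up for illustration, and the parser only handles the common `[n/m] [UU_]` status and `recovery = x%` lines; `mdadm --detail` remains the more authoritative source.

```python
import re

# Array header such as "md0 : active raid5 ...".
ARRAY_RE = re.compile(r"^(md\d+)\s*:")
# Member status such as "[4/3] [UUU_]" (slots/active, then per-slot state).
STATUS_RE = re.compile(r"\[(\d+)/(\d+)\]\s+\[([U_]+)\]")
# Rebuild progress such as "recovery = 12.6%".
RECOVERY_RE = re.compile(r"recovery\s*=\s*([\d.]+)%")


def parse_mdstat(text: str) -> dict[str, dict]:
    """Return {array: {"slots", "active", "recovery"}} parsed from /proc/mdstat text."""
    arrays: dict[str, dict] = {}
    current = None
    for line in text.splitlines():
        header = ARRAY_RE.match(line)
        if header:
            current = header.group(1)
            arrays[current] = {"slots": None, "active": None, "recovery": None}
            continue
        if current is None:
            continue  # skip the "Personalities" line before the first array
        status = STATUS_RE.search(line)
        if status:
            arrays[current]["slots"] = int(status.group(1))
            arrays[current]["active"] = int(status.group(2))
        recovery = RECOVERY_RE.search(line)
        if recovery:
            arrays[current]["recovery"] = float(recovery.group(1))
    return arrays


def degraded(arrays: dict[str, dict]) -> list[str]:
    """Names of arrays with fewer active members than slots."""
    return [
        name
        for name, info in arrays.items()
        if info["active"] is not None and info["active"] < info["slots"]
    ]


# Hypothetical sample: md0 is rebuilding onto a freshly added disk.
SAMPLE = """Personalities : [raid1] [raid6] [raid5] [raid4]
md1 : active raid1 sdb1[1] sda1[0]
      976630336 blocks super 1.2 [2/2] [UU]

md0 : active raid5 sdf1[4] sde1[2] sdd1[1] sdc1[0]
      2929893888 blocks super 1.2 level 5, 512k chunk, algorithm 2 [4/3] [UUU_]
      [==>..................]  recovery = 12.6% (123069184/976631296) finish=98.1min speed=145000K/sec

unused devices: <none>
"""

if __name__ == "__main__":
    arrays = parse_mdstat(SAMPLE)
    for name in degraded(arrays):
        print(name, "degraded, recovery:", arrays[name]["recovery"])  # md0 degraded, recovery: 12.6
```

On a live box you would feed it `open("/proc/mdstat").read()` instead of the sample; an array stays listed as degraded until the rebuild finishes and its status returns to all `U`.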

10GBASE-T is now out and looks increasingly affordable. That’s going to be the Next Big Thing… The cards I used in this particular setup were way too expensive, though all I paid for was the transceivers (the cards were in a giant pile of gear that I sorted through over time). So it goes.

I will have to look into CloudStack. I’m looking for something to make it easier to manage all these virtual machines. OpenStack currently looks like it would be a nightmare to implement, so I’m looking for something a bit simpler and more stable. Hmm….

I always preferred no-drama systems to the fastest thing ever seen by man.
